Control charts have two general uses in an improvement project.

The most common application is as a tool to monitor process stability and control.

A less common, although some might argue more powerful, use of control charts is as an analysis tool.

The descriptions below provide an overview of the different types of control charts to help practitioners identify the best chart for any monitoring situation, followed by a description of the method for using control charts for analysis.

Identifying Variation

When a process is stable and in control, it displays common cause variation, that is, variation inherent to the process. A process is in control when, based on past experience, it can be predicted how the process will vary (within limits) in the future. If the process is unstable, it displays special cause variation: non-random variation from external factors.

Control charts are simple, robust tools for understanding process variability.

The Four Process States

Processes fall into one of four states: 1) the ideal, 2) the threshold, 3) the brink of chaos and 4) the state of chaos (Figure 1) [3].

When a process operates in the ideal state, that process is in statistical control and produces 100 percent conformance. This process has proven stability and target performance over time. This process is predictable and its output meets customer expectations.

A process that is in the threshold state is characterized by being in statistical control but still producing the occasional nonconformance. This type of process will produce a constant level of nonconformances and exhibits low capability. Although predictable, this process does not consistently meet customer needs.

The brink of chaos state reflects a process that is not in statistical control, but also is not producing defects. In other words, the process is unpredictable, but the outputs of the process still meet customer requirements. The lack of defects leads to a false sense of security, however, as such a process can produce nonconformances at any moment. It is only a matter of time.

The fourth process state is the state of chaos. Here, the process is not in statistical control and produces unpredictable levels of nonconformance.
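The four states reduce to two yes/no questions: is the process in statistical control, and does it produce 100 percent conformance? A minimal sketch of that mapping follows; the function and parameter names are illustrative and not part of the original text.

```python
def process_state(in_statistical_control: bool, fully_conforming: bool) -> str:
    """Map the two yes/no questions onto one of the four process states."""
    if in_statistical_control and fully_conforming:
        return "ideal"
    if in_statistical_control:
        return "threshold"        # predictable, but some nonconformance
    if fully_conforming:
        return "brink of chaos"   # no defects yet, but unpredictable
    return "state of chaos"       # unpredictable and nonconforming


print(process_state(True, False))   # threshold
print(process_state(False, True))   # brink of chaos
```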

Every process falls into one of these states at any given time, but will not remain in that state. All processes will migrate toward the state of chaos. Companies typically begin some type of improvement effort when a process reaches the state of chaos (although arguably they would be better served to initiate improvement plans at the brink of chaos or threshold state). Control charts are robust and effective tools for detecting this natural process degradation (Figure 2) [3].

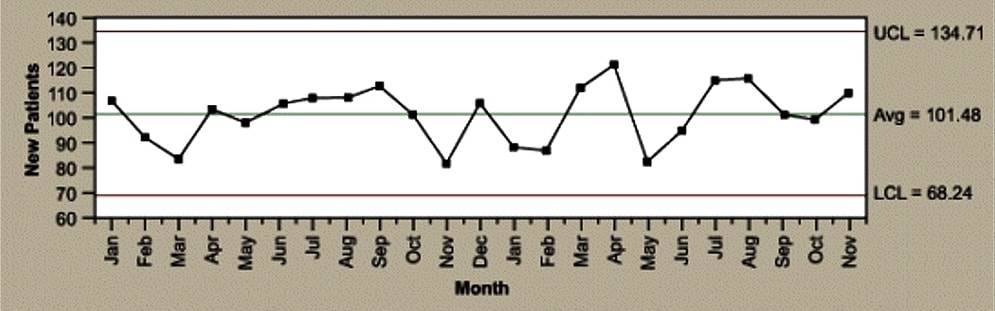

Elements of a Control Chart

There are three main elements of a control chart as shown in Figure 3.

- A control chart begins with a time series graph.

- A central line (X̄) is added as a visual reference for detecting shifts or trends – this is also referred to as the process location.

- Upper and lower control limits (UCL and LCL) are computed from available data and placed equidistant from the central line. This is also referred to as process dispersion.

Control limits (CLs) ensure time is not wasted looking for unnecessary trouble – the goal of any process improvement practitioner should be to only take action when warranted. Control limits are calculated by:

- Estimating the standard deviation, σ, of the sample data

- Multiplying that number by three

- Adding 3 x σ to the average for the UCL and subtracting 3 x σ from the average for the LCL

Mathematically, the calculation of control limits looks like:

$$\mathrm{UCL} = \bar{x} + 3\hat{\sigma} \qquad \mathrm{LCL} = \bar{x} - 3\hat{\sigma}$$

(Note: The hat over the sigma symbol indicates that this is an estimate of standard deviation, not the true population standard deviation.)
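The three steps can be sketched in a few lines of Python. This is only an illustration: it assumes the sample standard deviation as the estimate of σ, whereas specific chart types usually use a range-based estimate instead. The function name and the data are made up.

```python
from statistics import mean, stdev


def three_sigma_limits(values):
    """Return (LCL, center line, UCL) using the average plus or minus 3 sigma-hat."""
    center = mean(values)
    sigma_hat = stdev(values)  # assumption: sample standard deviation as the estimate
    return center - 3 * sigma_hat, center, center + 3 * sigma_hat


# Hypothetical measurements, in time order
lcl, center, ucl = three_sigma_limits([10, 12, 11, 13, 9])
print(f"LCL={lcl:.2f}  CL={center:.2f}  UCL={ucl:.2f}")
```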

Because control limits are calculated from process data, they are independent of customer expectations or specification limits.

Control rules take advantage of the normal curve in which 68.27 percent of all data is within plus or minus one standard deviation from the average, 95.45 percent of all data is within plus or minus two standard deviations from the average, and 99.73 percent of data will be within plus or minus three standard deviations from the average. As such, data should be normally distributed (or transformed) when using control charts, or the chart may signal an unexpectedly high rate of false alarms.
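These coverage figures can be checked with the standard library's error function. The sketch below assumes a perfectly normal distribution, and the helper name is illustrative. Printed tables sometimes differ from the computed values in the last digit because of rounding.

```python
import math


def coverage_within(k: float) -> float:
    """Fraction of a normal distribution within plus or minus k standard deviations."""
    return math.erf(k / math.sqrt(2))


for k in (1, 2, 3):
    print(f"within {k} sigma: {coverage_within(k):.2%}")

# Expected share of in-control points falling outside the 3-sigma limits
print(f"false alarm rate per point: {1 - coverage_within(3):.2%}")
```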

Controlled Variation

Controlled variation is characterized by a stable and consistent pattern of variation over time, and is associated with common causes. A process operating with controlled variation has an outcome that is predictable within the bounds of the control limits.

Uncontrolled Variation

Uncontrolled variation is characterized by variation that changes over time and is associated with special causes. The outcomes of this process are unpredictable; a customer may be satisfied or unsatisfied given this unpredictability.

Please note: process control and process capability are two different things. A process should be stable and in control before process capability is assessed.

Control Charts for Continuous Data

Individuals and Moving Range Chart

The individuals and moving range (I-MR) chart is one of the most commonly used control charts for continuous data; it is applicable when one data point is collected at each point in time. The I-MR control chart is actually two charts used in tandem (Figure 7). Together they monitor the process average as well as process variation. With x-axes that are time based, the chart shows a history of the process.

The I chart is used to detect trends and shifts in the data, and thus in the process. The individuals chart must have the data time-ordered; that is, the data must be entered in the sequence in which it was generated. If data is not correctly tracked, trends or shifts in the process may not be detected and may be incorrectly attributed to random (common cause) variation. There are advanced control chart analysis techniques that forgo the detection of shifts and trends, but before applying these advanced methods, the data should be plotted and analyzed in time sequence.

The MR chart shows short-term variability in a process – an assessment of the stability of process variation. The moving range is the absolute difference between consecutive observations. It is expected that the difference between consecutive points is predictable. Points outside the control limits indicate instability. If there are any out-of-control points, the special causes must be eliminated.

Once the effect of any out-of-control points is removed from the MR chart, look at the I chart. Be sure to remove the point by correcting the process – not by simply erasing the data point.

The I-MR chart is best used when:

- The natural subgroup size is unknown.

- The integrity of the data prevents a clear picture of a logical subgroup.

- The data is scarce (therefore subgrouping is not yet practical).

- The natural subgroup needing to be assessed is not yet defined.
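A rough sketch of how I-MR limits could be computed is given below. It assumes the common convention of estimating σ as the average moving range divided by d2, with the n = 2 constants d2 = 1.128 and D4 = 3.268 that this article tabulates. The function name and data are hypothetical.

```python
from statistics import mean

D2_N2 = 1.128  # d2 for a moving range of two consecutive points
D4_N2 = 3.268  # D4 for n = 2, as tabulated in this article


def imr_limits(values):
    """Return (LCL, center line, UCL) for the I chart and the MR chart."""
    if len(values) < 2:
        raise ValueError("need at least two time-ordered observations")
    # Moving range: absolute difference between consecutive observations
    moving_ranges = [abs(b - a) for a, b in zip(values, values[1:])]
    mr_bar = mean(moving_ranges)
    sigma_hat = mr_bar / D2_N2  # assumption: sigma estimated as MR-bar / d2
    x_bar = mean(values)
    return {
        "I": (x_bar - 3 * sigma_hat, x_bar, x_bar + 3 * sigma_hat),
        "MR": (0.0, mr_bar, D4_N2 * mr_bar),  # no lower limit for n = 2
    }


limits = imr_limits([10, 12, 11, 13, 9])  # hypothetical data, in time order
print(limits["MR"])
print(limits["I"])
```

The MR limits are printed first to mirror the reading order described above: check the MR chart for stability, then the I chart.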

Xbar-Range Charts

Another commonly used control chart for continuous data is the Xbar and range (Xbar-R) chart (Figure 8). Like the I-MR chart, it is composed of two charts used in tandem. The Xbar-R chart is used when you can rationally collect measurements in subgroups of between two and 10 observations. Each subgroup is a snapshot of the process at a given point in time. The chart’s x-axes are time based, so that the chart shows a history of the process. For this reason, it is important that the data is in time-order.

The Xbar chart is used to evaluate consistency of process averages by plotting the average of each subgroup. It is efficient at detecting relatively large shifts (typically plus or minus 1.5 σ or larger) in the process average.

The R chart, on the other hand, plots the ranges of each subgroup. The R chart is used to evaluate the consistency of process variation. Look at the R chart first; if the R chart is out of control, then the control limits on the Xbar chart are meaningless.

Table 1 shows the formulas for calculating control limits. Many software packages do these calculations without much user effort. (Note: For an I-MR chart, use a sample size, n, of 2.) Notice that the control limits are a function of the average range (Rbar). This is the technical reason why the R chart needs to be in control before further analysis. If the range is unstable, the control limits will be inflated, which could cause an errant analysis and subsequent work in the wrong area of the process.
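Since the formulas themselves are not reproduced in this text, the sketch below assumes the standard textbook forms: the Xbar limits are the grand average plus or minus 3 x Rbar / (d2 x √n), and the R chart limits are D3 x Rbar and D4 x Rbar. A dash for D3 is treated as a lower limit of zero. The function name and data are hypothetical, and only a few subgroup sizes are included.

```python
from math import sqrt
from statistics import mean

# (d2, D3, D4) by subgroup size; D3 = 0.0 where the constants table shows a dash
CONSTANTS = {
    2: (1.128, 0.0, 3.268),
    3: (1.693, 0.0, 2.574),
    4: (2.059, 0.0, 2.282),
    5: (2.326, 0.0, 2.114),
}


def xbar_r_limits(subgroups):
    """Return (LCL, center line, UCL) for the Xbar chart and the R chart."""
    n = len(subgroups[0])
    if any(len(group) != n for group in subgroups):
        raise ValueError("all subgroups must have the same size")
    d2, big_d3, big_d4 = CONSTANTS[n]
    averages = [mean(group) for group in subgroups]
    ranges = [max(group) - min(group) for group in subgroups]
    grand_average = mean(averages)
    r_bar = mean(ranges)
    half_width = 3 * r_bar / (d2 * sqrt(n))  # 3 sigma of the subgroup average
    return {
        "Xbar": (grand_average - half_width, grand_average, grand_average + half_width),
        "R": (big_d3 * r_bar, r_bar, big_d4 * r_bar),
    }


# Hypothetical subgroups of three measurements each, in time order
limits = xbar_r_limits([[10, 12, 11], [13, 9, 11], [12, 12, 9]])
print(limits["R"])     # check the R chart first
print(limits["Xbar"])
```

Both sets of limits scale with Rbar, which is why an unstable range chart distorts the Xbar limits as well.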

Table 2: Constants for Calculating Control Limits

| n (Sample Size) | d2 | D3 | D4 |
|---|---|---|---|
| 2 | 1.128 | – | 3.268 |
| 3 | 1.693 | – | 2.574 |
| 4 | 2.059 | – | 2.282 |
| 5 | 2.326 | – | 2.114 |
| 6 | 2.534 | – | 2.004 |
| 7 | 2.704 | 0.076 | 1.924 |
| 8 | 2.847 | 0.136 | 1.864 |
| 9 | 2.970 | 0.184 | 1.816 |
| 10 | 3.078 | 0.223 | 1.777 |
| 11 | 3.173 | 0.256 | 1.744 |
| 12 | 3.258 | 0.283 | 1.717 |
| 13 | 3.336 | 0.307 | 1.693 |
| 14 | 3.407 | 0.328 | 1.672 |
| 15 | 3.472 | 0.347 | 1.653 |

Can these constants be calculated? Yes, based on d2, where d2 is a control chart constant that depends on subgroup size.
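As a rough illustration, D3 and D4 can be reproduced from d2 using the usual textbook relationships D4 = 1 + 3 x d3 / d2 and D3 = max(0, 1 − 3 x d3 / d2). These relationships need a second constant, d3 (lowercase), which does not appear in this article; the d3 values below are standard published figures brought in for this sketch. The function name is made up.

```python
# d2 values for three subgroup sizes, as tabulated in this article
D2 = {2: 1.128, 5: 2.326, 7: 2.704}
# Lowercase d3 is NOT given in this article; these are standard published values
LITTLE_D3 = {2: 0.853, 5: 0.864, 7: 0.833}


def range_chart_factors(n):
    """Return (D3, D4) for subgroup size n, derived from d2 and d3."""
    ratio = 3 * LITTLE_D3[n] / D2[n]
    big_d3 = max(0.0, 1 - ratio)  # a negative lower limit is replaced by zero
    big_d4 = 1 + ratio
    return big_d3, big_d4


for n in (2, 5, 7):
    big_d3, big_d4 = range_chart_factors(n)
    print(f"n={n}: D3={big_d3:.3f}  D4={big_d4:.3f}")
```

Because the inputs are rounded to three decimals, the results can differ from tabulated values in the last digit.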

The I-MR and Xbar-R charts use the relationship of Rbar/d2 as the estimate for standard deviation. For sample sizes less than 10, that estimate is more accurate than the sum of squares estimate. The constant, d2, is dependent on sample size. For this reason most software packages automatically change from Xbar-R to Xbar-S charts around sample sizes of 10. The difference between these two charts is simply the estimate of standard deviation.

Control Charts for Discrete Data

c-Chart

Used when identifying the total count of defects per unit (c) that occurred during the sampling period, the c-chart allows the practitioner to assign each sample more than one defect. This chart is used when the number of samples of each sampling period is essentially the same.

u-Chart

Similar to a c-chart, the u-chart is used to track the total count of defects per unit (u) that occur during the sampling period and can track a sample having more than one defect. However, unlike a c-chart, a u-chart is used when the number of samples of each sampling period may vary significantly.
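A minimal sketch of c-chart and u-chart limits follows. It assumes the conventional Poisson-based formulas, which this article does not spell out: c̄ ± 3√c̄ for the c-chart and ū ± 3√(ū/n) for the u-chart, with negative lower limits set to zero. The function names and data are hypothetical.

```python
from math import sqrt


def c_chart_limits(defect_counts):
    """(LCL, center line, UCL) for defect counts from equal-sized samples."""
    c_bar = sum(defect_counts) / len(defect_counts)
    half_width = 3 * sqrt(c_bar)
    return max(0.0, c_bar - half_width), c_bar, c_bar + half_width


def u_chart_limits(defect_counts, units_inspected):
    """Center line u-bar plus one (LCL, UCL) pair per sampling period."""
    u_bar = sum(defect_counts) / sum(units_inspected)
    limits = []
    for n in units_inspected:
        half_width = 3 * sqrt(u_bar / n)  # limits widen as fewer units are inspected
        limits.append((max(0.0, u_bar - half_width), u_bar + half_width))
    return u_bar, limits


print(c_chart_limits([4, 6, 5, 3, 7]))
print(u_chart_limits([4, 6, 8], [10, 20, 30]))
```

The u-chart returns a separate pair of limits for each period, which is what lets it handle sample sizes that vary.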

np-Chart

Use an np-chart when identifying the total count of defective units (the unit may have one or more defects) with a constant sampling size.

p-Chart

Used when each unit can be considered pass or fail – no matter the number of defects – a p-chart shows the number of tracked failures (np) divided by the number of total units (n).
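The np-chart and p-chart limits can be sketched the same way. The formulas below are the conventional binomial-based ones and are an assumption, since this article does not list them: n·p̄ ± 3√(n·p̄(1 − p̄)) for the np-chart and p̄ ± 3√(p̄(1 − p̄)/n) for the p-chart. Limits are clamped to the possible range. Names and data are hypothetical.

```python
from math import sqrt


def np_chart_limits(defective_counts, sample_size):
    """(LCL, center line, UCL) for defective-unit counts with a constant sample size."""
    p_bar = sum(defective_counts) / (len(defective_counts) * sample_size)
    center = sample_size * p_bar
    half_width = 3 * sqrt(center * (1 - p_bar))
    return max(0.0, center - half_width), center, min(float(sample_size), center + half_width)


def p_chart_limits(defective_counts, sample_sizes):
    """Center line p-bar plus one (LCL, UCL) pair per sample."""
    p_bar = sum(defective_counts) / sum(sample_sizes)
    limits = []
    for n in sample_sizes:
        half_width = 3 * sqrt(p_bar * (1 - p_bar) / n)
        limits.append((max(0.0, p_bar - half_width), min(1.0, p_bar + half_width)))
    return p_bar, limits


print(np_chart_limits([4, 6, 5], sample_size=50))
print(p_chart_limits([5, 10], [50, 100]))
```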

Notice that, unlike the variables charts, none of the discrete control charts has a corresponding range chart. The standard deviation is estimated from the parameter itself (p, u or c); therefore, a range is not required.

How to Select a Control Chart

Although this article describes a plethora of control charts, there are simple questions a practitioner can ask to find the appropriate chart for any given use. Figure 13 walks through these questions and directs the user to the appropriate chart.

A number of points may be taken into consideration when identifying the type of control chart to use, such as:

- Variables control charts (those that measure variation on a continuous scale) are more sensitive to change than attribute control charts (those that measure variation on a discrete scale).

- Variables charts are useful for processes such as measuring tool wear.

- Use an individuals chart when few measurements are available (e.g., when they are infrequent or are particularly costly). These charts should be used when the natural subgroup is not yet known.

- A measure of defects per unit is found with u- and c-charts.

- In a u-chart, the defects within the unit must be independent of one another, such as with component failures on a printed circuit board or the number of defects on a billing statement.

- Use a u-chart for continuous items, such as fabric (e.g., defects per square meter of cloth).

- A c-chart is a useful alternative to a u-chart when there are a lot of possible defects on a unit, but there is only a small chance of any one defect occurring (e.g., flaws in a roll of material).

- When charting proportions, p- and np-charts are useful (e.g., compliance rates or process yields).
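The selection questions can be approximated in code. The sketch below paraphrases the chart descriptions in this article rather than reproducing Figure 13. Using a p-chart when sample sizes vary is the usual convention but is an assumption here, and the function and parameter names are made up.

```python
def select_chart(data_type, subgroup_size=1, counting="defects", constant_sample_size=True):
    """Suggest a control chart from a few yes/no style questions."""
    if data_type == "continuous":
        if subgroup_size == 1:
            return "I-MR"
        if subgroup_size <= 10:
            return "Xbar-R"
        return "Xbar-S"  # larger subgroups: standard deviation replaces the range
    if data_type == "discrete":
        if counting == "defects":      # a unit can carry more than one defect
            return "c" if constant_sample_size else "u"
        if counting == "defectives":   # each unit simply passes or fails
            return "np" if constant_sample_size else "p"
        raise ValueError("counting must be 'defects' or 'defectives'")
    raise ValueError("data_type must be 'continuous' or 'discrete'")


print(select_chart("continuous", subgroup_size=5))                                    # Xbar-R
print(select_chart("discrete", counting="defects", constant_sample_size=False))      # u
```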

Subgrouping: Control Charts as a Tool for Analysis

Subgrouping is the method for using control charts as an analysis tool. The concept of subgrouping is one of the most important components of the control chart method. The technique organizes data from the process to show the greatest similarity among the data in each subgroup and the greatest difference among the data in different subgroups.

The aim of subgrouping is to include only common causes of variation within subgroups and to have all special causes of variation occur among subgroups. When the within-group and between-group variation is understood, the number of potential variables – that is, the number of potential sources of unacceptable variation – is reduced considerably, and where to expend improvement efforts can more easily be determined.

Within-subgroup Variation

For each subgroup, the within variation is represented by the range.

The R chart displays change in the within-subgroup dispersion of the process and answers the question: Is the variation within subgroups consistent? If the range chart is out of control, the system is not stable, and the source of the instability, such as poor measurement repeatability, needs to be found. Analytically this matters because the control limits on the Xbar chart are a function of R-bar. If the range chart is out of control, R-bar is inflated, and so are the control limits. This increases the likelihood of mistaking between-subgroup variation for within-subgroup variation, which can direct improvement work to the wrong area.

Within variation is consistent when the R chart – and thus the process it represents – is in control. The R chart must be in control to draw the Xbar chart.

Between-subgroup Variation

Between-subgroup variation is represented by the difference in subgroup averages.

Xbar Chart, Take Two

The Xbar chart shows any changes in the average value of the process and answers the question: Is the variation between the averages of the subgroups more than the variation within the subgroup?

If the Xbar chart is in control, the variation “between” is lower than the variation “within.” If the Xbar chart is not in control, the variation “between” is greater than the variation “within.”

This is close to being a graphical analysis of variance (ANOVA). The between and within analyses provide a helpful graphical representation while also providing the ability to assess stability that ANOVA lacks. Using this analysis along with ANOVA is a powerful combination.
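To make the comparison with ANOVA concrete, the sketch below computes the between-subgroup and within-subgroup mean squares of a one-way ANOVA by hand. It is an illustration with made-up data and a hypothetical function name, not a substitute for the control chart itself.

```python
from statistics import mean


def between_within_mean_squares(subgroups):
    """Return (between-subgroup, within-subgroup) mean squares of a one-way ANOVA."""
    k = len(subgroups)
    n_total = sum(len(group) for group in subgroups)
    grand_average = mean(x for group in subgroups for x in group)
    ss_between = sum(len(group) * (mean(group) - grand_average) ** 2 for group in subgroups)
    ss_within = sum((x - mean(group)) ** 2 for group in subgroups for x in group)
    return ss_between / (k - 1), ss_within / (n_total - k)


# Hypothetical subgroups; the third has clearly shifted upward
ms_between, ms_within = between_within_mean_squares([[9, 10, 11], [10, 11, 12], [14, 15, 16]])
print(ms_between, ms_within, ms_between / ms_within)  # 21.0 1.0 21.0
```

A large ratio of between to within variation corresponds to the situation in which the Xbar chart would signal.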

Conclusion

Knowing which control chart to use in a given situation will assure accurate monitoring of process stability. It will eliminate erroneous results and wasted effort, focusing attention on the true opportunities for meaningful improvement.

References

- Quality Council of Indiana. The Certified Six Sigma Black Belt Primer, Second Edition, Quality Council of Indiana, West Terre Haute, Ind., 2012.

- Kubiak, T.M. and Benbow, Donald W. The Certified Six Sigma Black Belt Handbook, Second Edition, ASQ Quality Press, Milwaukee, Wisc., 2009.

- Wheeler, Donald J. and Chambers, David S. Understanding Statistical Process Control. SPC Press, Knoxville, Tenn., 1992.